Very important reminder: the tip below explains how to remove an item from the index, but it does not prevent the item from being picked up again during the next crawl (full or incremental). To prevent the item from being crawled again, you must take additional steps (such as creating a crawl rule for the item). Big thanks to Mikael Svenson for the reminder!

Earlier today I posted an article on Microsoft Learning’s Born to Learn website, and I thought it would be an interesting article for the audience here as well. The article covers how to force FAST Search for SharePoint to remove an item from the index immediately, without having to wait until the next crawl.

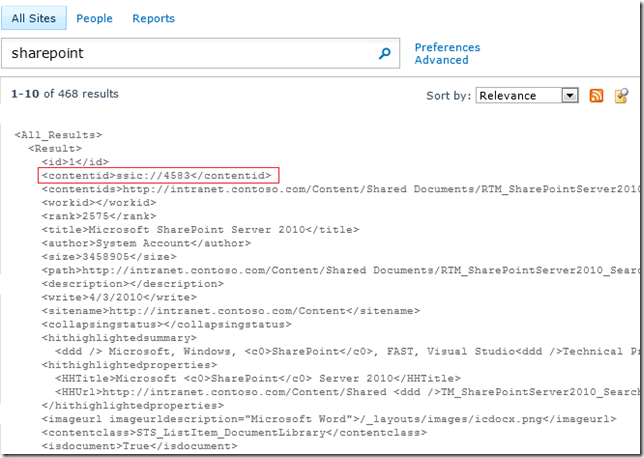

In the article linked above I showed how to obtain the Item ID (or ssic://<id>) using the Crawl Logs, so in this post I will show how to obtain it directly through the Search Center. This is also a neat way to get the raw XML for the search results, which is very helpful when you are troubleshooting issues with your search results (for example, to confirm that a property is being returned correctly).

The first thing you need to do is execute a query that returns the item you want to remove from the index. Next you will need to customize the Search Core Results Web Part with this XSL (obtained from this MSDN article on how to get XML search results):

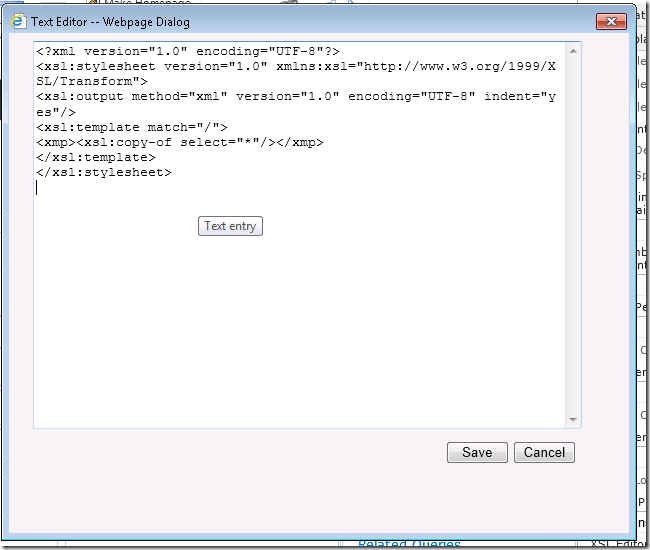

    <?xml version="1.0" encoding="UTF-8"?>
    <xsl:stylesheet version="1.0" xmlns:xsl="http://www.w3.org/1999/XSL/Transform">
      <xsl:output method="xml" version="1.0" encoding="UTF-8" indent="yes"/>
      <xsl:template match="/">
        <xmp><xsl:copy-of select="*"/></xmp>
      </xsl:template>
    </xsl:stylesheet>

These are the detailed steps to configure the Search Core Results Web Part to use this XSL as well as return the additional property we need to run the command to remove the item from the index:

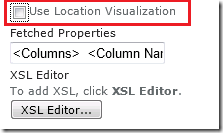

1) While editing the Search Core Results Web Part, uncheck the option “Use Location Visualization” under Display Properties

2) Click to open the XSL Editor and replace all the contents of the XSL entered there with the XSL listed above

3) Edit the Fetched Properties parameter, adding the following entry between the opening <Columns> tag and the closing </Columns> tag (right after the opening tag works fine): <Column Name="contentid"/>

4) Confirm the changes to the web part, save the page and check the new output of your search results page showing the full XML with the additional properties you wanted
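If the results page returns many items, picking the right contentid out of the raw XML by eye can be tedious. Below is a minimal Python sketch that pulls the contentid for each result out of a copy of that XML. The element names (`All_Results`, `Result`, `url`, `contentid`) and the sample URLs and IDs are assumptions for illustration, so check them against the XML your own page actually shows.

```python
import xml.etree.ElementTree as ET
from typing import Dict

# Hypothetical sample of the raw XML shown by the results page.
# The element names and values here are assumptions; real output
# contains many more elements per result.
SAMPLE_XML = """
<All_Results>
  <Result>
    <id>1</id>
    <title>Quarterly report</title>
    <url>http://intranet/docs/report.docx</url>
    <contentid>ssic://4583</contentid>
  </Result>
  <Result>
    <id>2</id>
    <title>Meeting notes</title>
    <url>http://intranet/docs/notes.docx</url>
    <contentid>ssic://4590</contentid>
  </Result>
</All_Results>
"""


def content_ids_by_url(raw_xml: str) -> Dict[str, str]:
    """Map each result URL to its contentid, skipping incomplete results."""
    root = ET.fromstring(raw_xml.strip())
    ids: Dict[str, str] = {}
    for result in root.iter("Result"):
        url = result.findtext("url")
        content_id = result.findtext("contentid")
        if url and content_id:
            ids[url.strip()] = content_id.strip()
    return ids


if __name__ == "__main__":
    for url, content_id in content_ids_by_url(SAMPLE_XML).items():
        print(url, content_id)
```

Copy the XML from the page into a string (or a file you read in) and look up the URL of the item you want to remove.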

Now, with the contentid in hand, we can execute the command to remove this item from the index:

docpush -c sp -U -d ssic://4583
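Since a typo in the ID could point the command at the wrong item, it can be worth sanity-checking the value before running it. The Python sketch below only assembles the command line shown above; it does not run docpush. The helper name, the `collection` parameter name, and the assumption that a contentid is always `ssic://` followed by digits are mine, based on the single example in this post.

```python
import re
from typing import List

# Assumption: a contentid looks like "ssic://" followed by digits,
# as in the example from this post. Adjust if your IDs differ.
CONTENT_ID_PATTERN = re.compile(r"ssic://\d+")


def build_docpush_removal(content_id: str, collection: str = "sp") -> List[str]:
    """Return the docpush arguments used in this post for one item.

    The flags (-c, -U, -d) are copied as-is from the command above;
    this helper (a hypothetical name) only validates the ID and
    fills it in.
    """
    if not CONTENT_ID_PATTERN.fullmatch(content_id):
        raise ValueError("unexpected contentid format: %r" % content_id)
    return ["docpush", "-c", collection, "-U", "-d", content_id]


if __name__ == "__main__":
    # Print the command so it can be reviewed before being run by hand.
    print(" ".join(build_docpush_removal("ssic://4583")))
```

Printing the command instead of executing it keeps the final step manual, which seems prudent for something that deletes content from the index.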

And with that you have a way to remove items from the FAST Search for SharePoint index whenever you need to. It does take some work, but at least now there is a way to do it.

Nice post, and a very handy trick!

While it’s certainly a quick way to get a document out from the index, we have to hope the item is not modified in any way, because then it will re-enter the index on the next incremental crawl 🙂

Hopefully we will get a fool-proof way of black-listing certain documents in the future, or of keeping them from being indexed based on some arbitrary rule. I know we can use docpush magic for this today, but it is a bit cumbersome.

That’s a great point, Mikael! I completely forgot to add this information while I was doing the post, but I will put an addendum with the reminder that any items removed should also be blocked from being crawled again (either through a crawler rule or any other way).

Thanks again for the reminder 🙂

–Leo

Hi Leo,

Your post is interesting and useful. But I would like to know how you can achieve blocking items from being crawled again. I cannot find any hints on how to use crawl rules to block them 😦

Keith

Hi Keith!

It’s been such a long time (5+ years) since I last did anything with FAST Search for SharePoint that I can’t recall if any easier way to blacklist items was ever added to the software. It does sound like crawl rules (https://technet.microsoft.com/en-us/library/ff473168%28v=office.14%29.aspx?f=255&MSPPError=-2147217396#procedure1) would be the way to go, but they can indeed be quite cumbersome if you have multiple items you want to blacklist, each with their own url pattern.

Good luck there!

Best,

Leo

I used to read your blog habitually, I can’t believe I ever stopped! Now I remember what got me captivated overall.

Thank you, Tony! I always had a lot of fun writing this blog. It is wonderful to know that it was helpful to others 🙂

Best,

Leo